Quantum computing context


In the following video, Olivia Lanes steps you through the content in this lesson. Alternatively, you can open the YouTube video for this lesson in a separate window.

You began this course by diving right into running your first quantum circuit, and learning how the laws of quantum mechanics are used to create quantum states, gates, and circuits. Now, let's zoom out a bit. In this section, we will explore quantum computing through different frameworks that will help you navigate conversations, headlines, and articles about quantum computing with a more critical eye.

There is no doubt that there is a lot of excitement about quantum computing, and the possibilities this technology could offer. One might even go so far as to call it "hype." As is always the case when there is hype around a new discovery, it can be hard to tell fact from fiction. With that in mind, it is best to start with what quantum computing is not:

- Quantum computing is not going to replace traditional, classical computers — nor is it going to end up in a "quantum cell phone"

- It is not a way to "simultaneously check all possible answers at the same time"

- It is not universally better than classical computers for all tasks

- It is not in a war with AI

- It is not useless until we achieve fault tolerance or error correction

- It isn't magic

Hopefully that did not steer you away from this course entirely or make you think there is actually nothing of value here. Quite the contrary! Quantum computing has the potential to be immensely powerful — but only for certain applications. Luckily, those applications include areas of active research that could fundamentally transform the way we approach important problems, such as chemistry simulations, materials exploration, and analysis of large data sets. Before we explore these application areas, let's first dig into some of these misconceptions in more detail.

Scaling

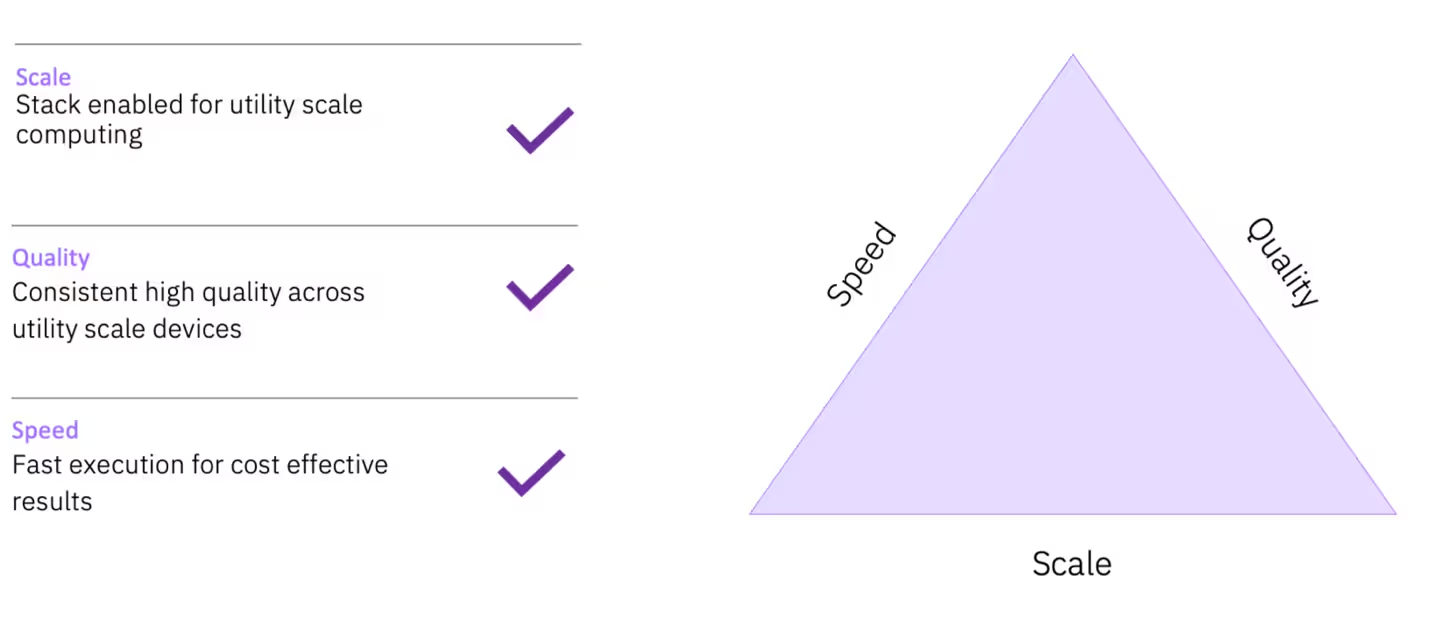

Another common misconception about quantum computers is that the more qubits they have, the more powerful they are. This is not necessarily wrong, but it does not paint the full picture. Scaling up qubit count is certainly crucial, but it is no more important than the quality of the qubits themselves. Quality is measured in a number of ways. Two of the most important are the relaxation and dephasing times, $T_1$ and $T_2$, respectively. These measure how long quantum information in a qubit remains stable. When the first superconducting qubits were demonstrated, these times were on the order of nanoseconds (Nakamura et al., 1999). Today, we regularly produce qubits with stable coherence times of hundreds of microseconds.
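To see why that jump in coherence time matters, the sketch below makes a simplifying assumption: quantum information decays as $e^{-t/T}$ for a single coherence time $T$. Real $T_1$ and $T_2$ processes differ in detail. The sketch compares how much information survives one circuit for a coherence time of a few nanoseconds and for one of hundreds of microseconds. The gate duration and circuit depth are hypothetical round numbers, not specifications of any real device.

```python
import math

def surviving_fraction(elapsed_s: float, coherence_s: float) -> float:
    """Fraction of the qubit's quantum information left after `elapsed_s` seconds,
    assuming simple exponential decay with a single coherence time."""
    return math.exp(-elapsed_s / coherence_s)

# Hypothetical round numbers, for illustration only.
GATE_TIME_S = 50e-9   # assumed duration of one layer of gates: 50 ns
NUM_LAYERS = 100      # assumed circuit depth

elapsed = GATE_TIME_S * NUM_LAYERS  # total circuit duration: 5 microseconds

for label, coherence in [("a few ns (early qubits)", 2e-9),
                         ("hundreds of us (modern qubits)", 300e-6)]:
    frac = surviving_fraction(elapsed, coherence)
    print(f"coherence time of {label}: {frac:.3g} survives a {elapsed * 1e6:.0f} us circuit")
```

Under these assumptions, the early qubit loses essentially everything long before the circuit finishes. The modern qubit keeps roughly 98% of its information.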

Speed is another critical measure of how quantum computers are improving. To measure speed, we use a metric called Circuit Layer Operations per Second (CLOPS). CLOPS accounts for two things: the time to run a circuit, and the real-time and near-time classical computation around it. This lets it serve as a single, holistic measure of speed.
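The function below is a rough sketch of how a throughput metric like CLOPS is assembled. It follows the definition from IBM's original CLOPS benchmark as we understand it. That benchmark runs a fixed number of parameterized circuit templates, updates each one's parameters several times, and samples every run with a fixed number of shots. The total number of circuit layers executed is then divided by the wall-clock time. The parameter values (100 templates, 10 updates, 100 shots) and the timing in the example are assumptions for illustration. Consult IBM's documentation for the current definition.

```python
def clops(num_templates: int, num_updates: int, num_shots: int,
          num_layers: int, elapsed_s: float) -> float:
    """Circuit layer operations per second.

    Counts every layer of every shot of every parameter update of every
    template, then divides by the total wall-clock time. That time includes
    the classical work between runs, such as compilation, parameter updates,
    and data transfer.
    """
    total_layers = num_templates * num_updates * num_shots * num_layers
    return total_layers / elapsed_s

# Hypothetical run: 100 templates x 10 updates x 100 shots, 5 layers per circuit
# (assumed here to be log2 of the quantum volume), taking 100 s in total.
print(clops(100, 10, 100, 5, 100.0))  # 5000.0
```

Because the elapsed time includes the classical steps, faster compilation or data transfer raises CLOPS even if the QPU itself is unchanged.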

Scale, quality, and speed are all needed to continue building the path towards a fault-tolerant, universal quantum computer. That's why, on the IBM Quantum® roadmap, some jumps between processors do not come with large increases in qubit count. For example, the qubit count changes little between Heron and Nighthawk, because qubit count is not the focus of that improvement. Instead, Nighthawk implements a new connectivity topology that will allow for different error correction codes.

Error correction versus error mitigation

Error correction remains one of the biggest long-term goals for researchers in quantum computing. It rests on the premise that qubits will always be somewhat noisy and prone to errors. To run large-scale algorithms, such as Shor's algorithm, we will therefore need to detect and correct these errors in real time. There are many types of error-correcting codes, and we refer you to other courses (such as the Foundations of quantum error correction course) if you want to dive more deeply into them.
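The core idea of error correction is to spread one logical bit across several noisy physical bits so that errors can be detected and undone. Its simplest classical analogue is a three-bit repetition code decoded by majority vote. The toy below assumes each copy flips independently with probability `p`. It is not one of the quantum codes covered in the error correction course, but it shows why redundancy helps when `p` is small.

```python
import random

def logical_error_rate(p: float) -> float:
    """Exact failure probability of a 3-bit repetition code under majority vote.

    The decoded bit is wrong only if two or three of the three copies flip:
    3 * p**2 * (1 - p) + p**3, which simplifies to 3p^2 - 2p^3.
    """
    return 3 * p**2 * (1 - p) + p**3

def simulate(p: float, trials: int, seed: int = 0) -> float:
    """Monte Carlo estimate of the same quantity, for comparison."""
    rng = random.Random(seed)
    failures = 0
    for _ in range(trials):
        flips = sum(rng.random() < p for _ in range(3))  # number of flipped copies
        if flips >= 2:                                     # majority vote decodes wrongly
            failures += 1
    return failures / trials

for p in (0.01, 0.1, 0.3):
    print(f"p={p}: exact={logical_error_rate(p):.4f}, simulated={simulate(p, 100_000):.4f}")
```

For any `p` below 0.5, the encoded bit fails less often than a single bare bit. At `p = 0.1`, for example, the logical error rate is 0.028. Quantum codes are more elaborate because they must also handle phase errors, and because quantum states cannot simply be copied.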

Error mitigation, on the other hand, is already used regularly to improve quantum computing results. The idea behind error mitigation is to accept that errors will occur and to model their behavior so we can reduce their effect on the results. There are many error mitigation techniques, and many require multiple runs on a quantum computer plus some classical post-processing. It is unlikely that error correction will completely replace error mitigation. Instead, we expect both to be used together to return the best possible results from quantum computers.
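One widely used mitigation technique is zero-noise extrapolation (ZNE). You run the same circuit at several deliberately amplified noise levels. Classical post-processing then extrapolates the measured expectation value back to zero noise. The sketch below replaces the quantum computer with a hypothetical toy noise model, in which the expectation value decays exponentially with the noise scale factor. It extrapolates with a straight-line fit. Both choices are illustrative assumptions.

```python
import math

IDEAL_VALUE = 1.0   # noiseless expectation value (unknown in a real experiment)
DECAY_RATE = 0.1    # hypothetical noise strength per unit of scale factor

def noisy_expectation(scale: float) -> float:
    """Toy stand-in for a hardware run at a given noise scale factor."""
    return IDEAL_VALUE * math.exp(-DECAY_RATE * scale)

def linear_extrapolate_to_zero(xs: list[float], ys: list[float]) -> float:
    """Fit a least-squares line through (xs, ys) and return its value at x = 0."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sum(
        (x - mean_x) ** 2 for x in xs
    )
    return mean_y - slope * mean_x

scales = [1.0, 2.0, 3.0]  # 1.0 = the hardware's native noise level
values = [noisy_expectation(s) for s in scales]
mitigated = linear_extrapolate_to_zero(scales, values)

print(f"unmitigated: {values[0]:.4f}")
print(f"mitigated:   {mitigated:.4f}")
```

The mitigated value lands much closer to the ideal value of 1.0 but not exactly on it, because a straight line does not match the exponential decay exactly. Choosing the extrapolation model is one of the main design decisions in ZNE.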

Quantum computing components

Earlier, we mentioned that it is a common misconception that quantum computers will one day replace classical computers. This is distinctly not the case: quantum and classical computers are not at war, each trying to replace the other. In fact, as noted in the previous section, quantum computers need classical computers to function, for a variety of reasons. When we talk about "computers" broadly, we usually assume they include components such as a CPU, RAM, storage, and so on. A quantum computer, by contrast, does not have all these components. When people talk about a quantum computer, they are often referring to the QPU, or Quantum Processing Unit. The QPU works alongside a CPU as a specialized processor rather than replacing it. The QPU itself is not a general-purpose computer. It does not run an operating system, manage memory, or handle user interfaces. Its sole role is to manipulate qubits according to carefully controlled quantum operations and return measurement results to a classical system.

In practice, today's quantum computers are best understood as hybrid systems. A classical computer orchestrates the workflow — preparing inputs, compiling quantum circuits, scheduling jobs, and post-processing results — while the QPU executes only the quantum portion of the computation. Even as quantum hardware advances, this division of labor is expected to persist, with progress focusing on tighter integration and faster communication between classical systems and QPUs rather than eliminating classical components altogether.
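This division of labor can be sketched as a small variational loop. Here the "QPU" is a hypothetical classical stand-in that prepares the one-qubit state $R_Y(\theta)|0\rangle$ and returns measurement counts. The classical side does everything else:

- chooses the parameter $\theta$,
- turns the counts into the expectation value $\langle Z \rangle = \cos\theta$,
- updates $\theta$ with the parameter-shift rule.

On real hardware, `run_on_qpu` would instead submit a circuit through a quantum SDK. Nothing here is a real device API.

```python
import math
import random

rng = random.Random(7)  # fixed seed so the simulated shot noise is reproducible

def run_on_qpu(theta: float, shots: int) -> dict[str, int]:
    """Hypothetical stand-in for the QPU: sample RY(theta)|0> in the Z basis.

    P(measure 0) = cos^2(theta / 2). Only this function plays the quantum role.
    """
    p0 = math.cos(theta / 2) ** 2
    zeros = sum(rng.random() < p0 for _ in range(shots))
    return {"0": zeros, "1": shots - zeros}

def expectation_z(counts: dict[str, int]) -> float:
    """Classical post-processing: <Z> = P(0) - P(1)."""
    total = counts["0"] + counts["1"]
    return (counts["0"] - counts["1"]) / total

def estimate(theta: float, shots: int = 2000) -> float:
    return expectation_z(run_on_qpu(theta, shots))

# Classical optimizer: gradient descent that minimizes <Z>.
theta = 0.5
learning_rate = 0.4
for step in range(40):
    # Parameter-shift rule: gradient of <Z> from two shifted circuit runs.
    grad = (estimate(theta + math.pi / 2) - estimate(theta - math.pi / 2)) / 2
    theta -= learning_rate * grad

# The minimum <Z> = -1 occurs at theta = pi, so theta should end up close to pi.
print(f"theta = {theta:.3f}, estimated <Z> = {estimate(theta):.3f}")
```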

Likely application areas of quantum computing

We broadly sort the areas where we believe quantum computing will be most impactful into four categories: Hamiltonian simulation, optimization, partial differential equations (PDEs), and machine learning.

Hamiltonian simulation

This topic is all about simulating quantum mechanical processes found in nature. At its core, it involves two broad tasks: finding the ground state energy of a system described by its Hamiltonian, which encodes the total energy and interactions within the system, and simulating how that system evolves over time (quantum dynamics).
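Both tasks appear in the smallest possible example: a single qubit with the Hamiltonian $H = aZ + bX$, where the coefficients $a$ and $b$ are chosen for illustration.

- Ground-state energy: $-\sqrt{a^2 + b^2}$.
- Dynamics: because $H^2 = (a^2 + b^2)I$, the time-evolution operator $e^{-iHt}$ has a closed form.

The sketch evaluates both with plain 2×2 arithmetic. For real molecules, the corresponding matrices are vastly larger.

```python
import math

def ground_energy(a: float, b: float) -> float:
    """Lowest eigenvalue of H = a*Z + b*X, i.e. the matrix [[a, b], [b, -a]].

    Its characteristic polynomial is lambda^2 - (a^2 + b^2) = 0, so the
    eigenvalues are +/- sqrt(a^2 + b^2) and the ground state takes the minus sign.
    """
    return -math.sqrt(a * a + b * b)

def evolve(a: float, b: float, t: float) -> tuple[complex, complex]:
    """Amplitudes of exp(-i H t)|0> for H = a*Z + b*X (units with hbar = 1).

    Since H^2 = w^2 I with w = sqrt(a^2 + b^2),
    exp(-i H t) = cos(w t) I - i sin(w t) H / w, and H|0> = (a, b).
    """
    w = math.sqrt(a * a + b * b)
    if w == 0.0:                       # H = 0: nothing happens
        return complex(1.0, 0.0), complex(0.0, 0.0)
    c, s = math.cos(w * t), math.sin(w * t)
    return complex(c, -s * a / w), complex(0.0, -s * b / w)

def expectation_z(state: tuple[complex, complex]) -> float:
    amp0, amp1 = state
    return abs(amp0) ** 2 - abs(amp1) ** 2

print(ground_energy(3.0, 4.0))  # -5.0

# Dynamics for H = X (a = 0, b = 1): <Z(t)> should follow cos(2t).
for t in (0.0, math.pi / 8, math.pi / 4):
    print(f"t={t:.3f}: <Z>={expectation_z(evolve(0.0, 1.0, t)):.3f}, cos(2t)={math.cos(2 * t):.3f}")
```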

This is one of the most natural application areas for quantum computers: quantum systems are notoriously difficult to simulate on classical computers, because the size of the quantum state space grows exponentially with the number of particles. Quantum computers, by contrast, represent quantum states directly, making them well-suited — at least in principle — for these types of problems.
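To make "grows exponentially" concrete: a brute-force classical simulation stores one complex amplitude for every basis state. That means $2^n$ amplitudes for $n$ qubits or two-level particles. The sketch assumes double-precision complex numbers (16 bytes each). It ignores any compression or structure that a smarter simulation method might exploit.

```python
BYTES_PER_AMPLITUDE = 16  # one complex number stored as two 64-bit floats

def statevector_bytes(num_qubits: int) -> int:
    """Memory needed to store all 2**n complex amplitudes of an n-qubit state."""
    return BYTES_PER_AMPLITUDE * 2 ** num_qubits

for n in (10, 30, 50):
    print(f"{n} qubits: {statevector_bytes(n):.3e} bytes")
```

Each added qubit doubles the memory. Thirty qubits already need 16 GiB, and fifty need 16 PiB.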

Key application areas include:

- Chemistry and materials science: predicting molecular structure, reaction pathways, binding energies, and material properties

- Condensed matter physics: studying strongly correlated systems, phase transitions, and exotic quantum states

- High-energy and nuclear physics: modeling particle interactions

In the long term, advances in Hamiltonian simulation could enable:

- More accurate drug discovery and catalyst design

- Discovery of new materials for batteries

- Deeper insight into fundamental physical phenomena

Many quantum algorithms, such as sample-based quantum diagonalization (SQD), were developed specifically with Hamiltonian simulation in mind. As a result, this category is often viewed as one of the most scientifically compelling and theoretically grounded use cases for quantum computing.

Optimization

Optimization problems involve finding the best solution from a large set of possible solutions, subject to constraints. These problems appear across science, engineering, and industry, and often become computationally intractable as the problem size grows.

Examples include:

- Scheduling and routing (for example, supply chains, traffic flow, airline scheduling)

- Portfolio optimization and risk management (finance)

- Resource allocation and logistics

- Combinatorial problems such as graph partitioning and max-cut

Many optimization problems are NP-hard in the sense of complexity theory. No known classical algorithm solves every instance of such a problem exactly in polynomial time, so large instances are typically tackled with heuristics or approximations. Because qubits behave differently from classical bits, quantum algorithms can represent candidate solutions differently. For some problems, this might let us explore solution spaces faster or more completely than classical algorithms.
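Max-cut, one of the combinatorial problems listed above, shows why exhaustive search stops being an option. The task is to split a graph's nodes into two groups so that as many edges as possible cross between them. A brute-force solver must check all $2^n$ assignments of $n$ nodes. The graph below is a hypothetical five-node example.

```python
from itertools import product

def cut_size(assignment: tuple[int, ...], edges: list[tuple[int, int]]) -> int:
    """Number of edges whose endpoints land in different groups (0 or 1)."""
    return sum(1 for i, j in edges if assignment[i] != assignment[j])

def brute_force_max_cut(num_nodes: int, edges: list[tuple[int, int]]):
    """Check all 2**num_nodes assignments and keep the best one."""
    best = max(product((0, 1), repeat=num_nodes), key=lambda a: cut_size(a, edges))
    return best, cut_size(best, edges)

# Hypothetical 5-node graph: a ring 0-1-2-3-4-0 plus one chord (0, 2).
edges = [(0, 1), (1, 2), (2, 3), (3, 4), (4, 0), (0, 2)]
assignment, size = brute_force_max_cut(5, edges)
print(assignment, size)
print("assignments checked:", 2 ** 5, "| for 60 nodes it would be", 2 ** 60)
```

Each extra node doubles the work. That doubling is why large instances rely on heuristics rather than exhaustive search.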

Common quantum approaches include:

- Variational algorithms, such as the Quantum Approximate Optimization Algorithm (QAOA)

- Hybrid classical–quantum workflows, where classical solvers guide and refine quantum subroutines
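The sketch below simulates the depth-one ($p = 1$) QAOA classically on a hypothetical three-node triangle graph. It follows four steps:

1. Start in an equal superposition of all bitstrings.
2. Apply the cost phase $e^{-i\gamma C}$, where $C$ counts cut edges. $C$ is the diagonal of the Hamiltonian $\sum_{(i,j)} (1 - Z_i Z_j)/2$.
3. Apply the mixer $e^{-i\beta X}$ to every qubit.
4. Compute the expected cut size.

A coarse grid search over $(\gamma, \beta)$ stands in for the classical optimizer of a hybrid workflow. This pure-Python simulation only works for a handful of qubits. It shows the structure of the algorithm, not its performance.

```python
import cmath
import math

EDGES = [(0, 1), (1, 2), (0, 2)]  # hypothetical example: a triangle graph
N = 3                             # number of qubits = number of nodes

def cut_value(z: int) -> int:
    """Cost C(z): edges cut by bitstring z (bit k gives the group of node k)."""
    return sum(1 for i, j in EDGES if ((z >> i) & 1) != ((z >> j) & 1))

def qaoa_expectation(gamma: float, beta: float) -> float:
    dim = 2 ** N
    # |+>^N: equal superposition over all bitstrings.
    state = [complex(1 / math.sqrt(dim))] * dim
    # Cost layer: exp(-i * gamma * C) is diagonal, so it only adds phases.
    state = [amp * cmath.exp(-1j * gamma * cut_value(z)) for z, amp in enumerate(state)]
    # Mixer layer: exp(-i * beta * X) = cos(beta) I - i sin(beta) X on each qubit.
    c, s = math.cos(beta), math.sin(beta)
    for q in range(N):
        new = state[:]
        for z in range(dim):
            if not (z >> q) & 1:  # visit each pair (z, z with bit q set) once
                partner = z | (1 << q)
                a0, a1 = state[z], state[partner]
                new[z] = c * a0 - 1j * s * a1
                new[partner] = -1j * s * a0 + c * a1
        state = new
    # Expected cut size <C> = sum over z of |amp_z|^2 * C(z).
    return sum(abs(amp) ** 2 * cut_value(z) for z, amp in enumerate(state))

# Classical outer loop: coarse grid search over the two angles.
steps = 40
best = max(
    (qaoa_expectation(math.pi * g / steps, math.pi * b / steps), g, b)
    for g in range(steps) for b in range(steps)
)
print(f"best <C> = {best[0]:.3f} (random guessing gives 1.5, the optimum is 2)")
```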

While it is still an open question when — or for which problems — quantum optimization will deliver a clear advantage over state-of-the-art classical methods, optimization remains a major area of interest due to its ubiquity and the natural mapping between optimization objectives and quantum Hamiltonians.

Partial Differential Equations (PDEs)

Partial differential equations describe how physical quantities change across space and time. They underpin many of the most important models in science and engineering, including fluid dynamics, electromagnetism, heat transfer, and financial modeling.

Examples include:

- Navier–Stokes equations for fluid flow

- Schrödinger and wave equations

- Maxwell's equations

- Black–Scholes and related financial PDEs

Solving PDEs numerically on classical computers often requires fine spatial grids and long time evolutions, leading to high computational cost and memory usage.

Quantum algorithms for PDEs typically rely on the following:

- Mapping PDEs to large systems of linear equations

- Quantum linear algebra subroutines, such as the HHL algorithm and its variants

- Hybrid workflows where classical preprocessing and postprocessing surround quantum cores
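The first step in that list, turning a PDE into a linear system, is also the first step a classical solver takes. The sketch below discretizes the one-dimensional Poisson equation $-u''(x) = f(x)$ on $[0, 1]$, with $u(0) = u(1) = 0$, using second-order finite differences. This gives a tridiagonal system $A\mathbf{u} = \mathbf{b}$, which the sketch solves classically with the Thomas algorithm. The test problem $f(x) = \pi^2 \sin(\pi x)$, with exact solution $u(x) = \sin(\pi x)$, is our choice for illustration. A quantum linear solver such as HHL targets the same kind of system. However, it returns the solution encoded in a quantum state rather than as a list of numbers, which is why readout appears among the assumptions for any speedup.

```python
import math

def solve_poisson_1d(f, n: int) -> tuple[list[float], list[float]]:
    """Solve -u'' = f on [0, 1] with u(0) = u(1) = 0 at n interior grid points.

    Central differences turn the PDE into the tridiagonal system
    (-u[i-1] + 2 u[i] - u[i+1]) / h^2 = f(x[i]), i.e. A u = b.
    """
    h = 1.0 / (n + 1)
    xs = [(i + 1) * h for i in range(n)]
    sub, main, sup = -1.0, 2.0, -1.0          # diagonals of A, scaled by h^2
    rhs = [h * h * f(x) for x in xs]          # right-hand side, scaled by h^2

    # Thomas algorithm, step 1: forward elimination.
    c_prime = [0.0] * n
    d_prime = [0.0] * n
    c_prime[0] = sup / main
    d_prime[0] = rhs[0] / main
    for i in range(1, n):
        denom = main - sub * c_prime[i - 1]
        c_prime[i] = sup / denom
        d_prime[i] = (rhs[i] - sub * d_prime[i - 1]) / denom
    # Step 2: back substitution.
    u = [0.0] * n
    u[-1] = d_prime[-1]
    for i in range(n - 2, -1, -1):
        u[i] = d_prime[i] - c_prime[i] * u[i + 1]
    return xs, u

xs, u = solve_poisson_1d(lambda x: math.pi ** 2 * math.sin(math.pi * x), n=50)
max_error = max(abs(ui - math.sin(math.pi * x)) for x, ui in zip(xs, u))
print(f"max error with 50 interior points: {max_error:.2e}")
```

The error shrinks roughly fourfold each time the grid spacing is halved. In two or three dimensions, however, the number of unknowns grows as the square or cube of the points per axis. That growth is where the computational cost comes from.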

In theory, certain quantum approaches can offer exponential or polynomial speedups under specific assumptions (such as efficient state preparation and readout). In practice, PDE solving is expected to be a longer-term application, closely tied to progress in fault-tolerant quantum computing and quantum–classical integration with high-performance computing (HPC) systems.

Machine learning

Quantum machine learning (QML) explores how quantum computers might enhance or accelerate aspects of machine learning and data analysis. This includes both of the following:

- Using quantum computers to approach classification problems in ways that behave differently from classical algorithms

- Developing new models that are inherently quantum in nature

Proposed applications include the following:

- Classification and clustering

- Kernel methods and feature maps

- Optimization subroutines within training loops

Many QML algorithms leverage the following:

- Parameterized quantum circuits as trainable models

- Variational optimization techniques

- Quantum kernels that implicitly operate in high-dimensional feature spaces
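Here is a minimal illustration of a quantum kernel. Assume a simple feature map that encodes each feature $x_k$ of a data point as a single-qubit rotation $R_Y(x_k)|0\rangle$, using one qubit per feature. The kernel is the overlap $k(\mathbf{x}, \mathbf{x}') = |\langle \phi(\mathbf{x}) | \phi(\mathbf{x}') \rangle|^2$. For this product-state map, it works out to $\prod_k \cos^2\big((x_k - x'_k)/2\big)$, and the sketch evaluates that formula classically. Precisely because this map is so easy to simulate, it offers no quantum advantage. Feature maps studied for advantage typically add entangling gates.

```python
import math

def kernel(x: list[float], y: list[float]) -> float:
    """Fidelity kernel |<phi(x)|phi(y)>|^2 for the product feature map
    phi(x) = RY(x_0)|0> (x) RY(x_1)|0> (x) ...  (one qubit per feature).

    Each qubit contributes <0|RY(x_k)^dagger RY(y_k)|0> = cos((y_k - x_k) / 2).
    """
    overlap = 1.0
    for xk, yk in zip(x, y):
        overlap *= math.cos((xk - yk) / 2)
    return overlap ** 2

def gram_matrix(data: list[list[float]]) -> list[list[float]]:
    """Kernel matrix K[i][j] = k(data[i], data[j]), as used by a kernel method."""
    return [[kernel(a, b) for b in data] for a in data]

# Hypothetical data points with two features each.
points = [[0.0, 0.0], [0.1, 0.2], [math.pi, 0.0]]
for row in gram_matrix(points):
    print([round(v, 3) for v in row])
```

The resulting matrix can be passed to any classical kernel method, such as a support vector machine. This is one way QML fits into hybrid workflows.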

However, machine learning is a particularly challenging area for quantum advantage. Classical machine learning is extremely mature, and quantum models must contend with issues such as data loading, noise, and scaling.

As a result, current research focuses on these areas:

- Identifying specific regimes where quantum models might outperform classical ones

- Exploring QML as part of hybrid workflows rather than standalone replacements

- Understanding expressivity, trainability, and generalization of quantum models

Quantum machine learning remains an active research area, with potential long-term impact — but also significant open questions about when and where practical advantage will emerge.

Conclusion

This lesson has made it clear that quantum computing isn't about replacing classical computers; it's about expanding what's computable. Building useful quantum computers is one of the most ambitious engineering projects humans have ever attempted. And like all ambitious projects, it's messy, slow, and pretty amazing.

If you want a follow-up on how these algorithms actually work, the next lesson will show you where to go from here based on your interests and career goals.